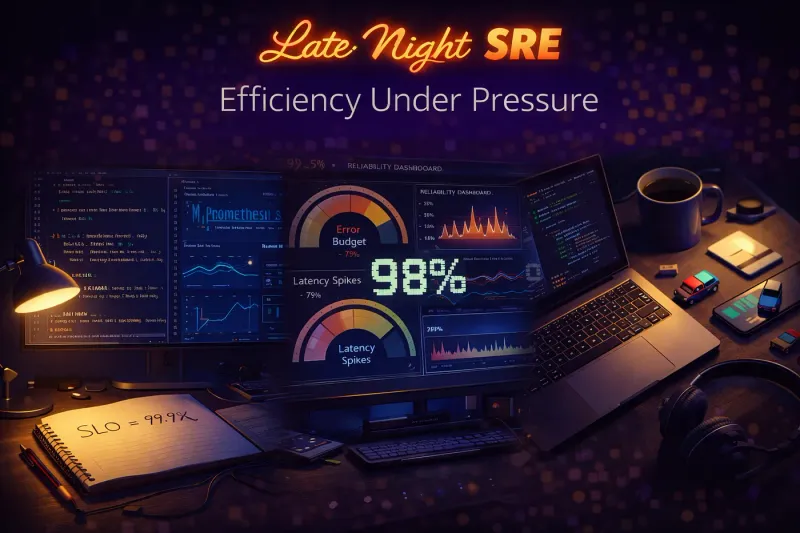

🎙️ Opening Monologue

If you followed my last series (Late Night Terraform), you know the ink on my Terraform certification is barely dry. I thought I’d have time to breathe, but a commitment to my organization pulled me back in: SRE Foundation Certification by Friday.

But here is the reality check: I am a father. I don’t have 120 hours of “study-vacation.” I have exactly 2 hours a night once the house goes quiet and the “Dad” hat comes off.

This isn’t just an exam prep; it’s a lesson in efficiency. When you only have 120 minutes before your eyes give out, every page you read has to count.

📋 The Briefing: Know Your Enemy

I checked the official SRE Foundation page. Here’s the “Intelligence Report” for a time-crunched parent:

- The Format: 40 Multiple Choice Questions.

- The Passing Grade: 65% (I’m aiming for higher).

- The Twist: It’s an Open Book exam.

Open Book. That was the silver lining. It meant I didn’t need to spend my precious 2 hours memorizing definitions. I needed to spend them building a “Search Engine” in my brain so I could find the right answers during the 60-minute exam window.

🛠️ The Strategy: Tactical Efficiency

I attended a training 6 months ago, but that knowledge was buried under months of life and work. I had to be ruthless with my time.

My “2-Hour” Execution Plan:

- Reverse Engineering: I didn’t start with the textbook. I started with the sample exam questions. I needed to see the “shape” of the questions so I didn’t waste time studying things that wouldn’t be tested.

- The AI Wingman: I narrated my thoughts into my phone while doing chores or commuting, letting AI structure my notes. By the time I sat down for my 2-hour window, the “busy work” of organizing was already done.

- The “Search Index” Method: Since it’s open book, I focused on knowing where information lived in the trainer’s slides rather than what the information was.

Section 1: SRE Principles and Practices

1. Definition and Origin

- SRE Core: A discipline that incorporates aspects of software engineering and applies them to infrastructure and operations problems.

- The Goal: To create scalable and highly reliable software systems.

- History: Formalized by Ben Treynor Sloss at Google (circa 2003). His famous quote defines it best: “SRE is what happens when you ask a software engineer to design an operations function.”

2. The 50% Rule (The SRE Balance)

- Operational Cap: SRE teams are strictly limited to a maximum of 50% operational work (manual tasks, tickets, on-call).

- Engineering Focus: The remaining 50% must be spent on development tasks (automation, feature addition, scaling) that reduce future operational load.

- System Health: If the “Ops” work exceeds 50%, it is a signal that the system is under-automated or unstable, requiring a shift in focus to “Project” work.
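To make the 50% cap concrete, here is a minimal sketch of how a team might check a week of tracked time against it. The function names and the sample hours are my own assumptions for illustration, not something from the course material.

```python
def ops_share(ops_hours: float, engineering_hours: float) -> float:
    """Return the fraction of total time spent on operational work."""
    total = ops_hours + engineering_hours
    if total <= 0:
        raise ValueError("total hours must be positive")
    return ops_hours / total


def exceeds_ops_cap(ops_hours: float, engineering_hours: float, cap: float = 0.5) -> bool:
    """True when operational work is above the cap (50% by default)."""
    return ops_share(ops_hours, engineering_hours) > cap


# Hypothetical week: 26 hours of tickets and on-call, 14 hours of project work.
print(f"{ops_share(26, 14):.0%}")  # 65%
print(exceeds_ops_cap(26, 14))     # True, so shift focus to project work
```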

3. SRE vs. DevOps

- The Relationship: SRE is an implementation of DevOps. While DevOps is a broad cultural philosophy, SRE provides the specific metrics and practices to achieve it.

- The Flow: DevOps focuses on moving code from Development to Production (velocity). SRE focuses on bringing insights from Production back to Development (reliability) to close the loop.

4. The Six Core Pillars of SRE

- Codifying Operations: Treating infrastructure and operational procedures as code (IaC and Automated Playbooks).

- Service Levels: Using data-driven metrics (SLIs/SLOs) to define “success” rather than subjective feelings.

- Toil Management: Identifying and eliminating “Toil” — work that is manual, repetitive, automatable, and lacks enduring value.

- Automation: The primary tool for scaling. SREs automate to ensure the system can grow without a linear increase in human effort.

- Reducing Cost of Failure: Using techniques like “Canary Releases” and “Blame-Free Post-mortems” to learn from outages without punishing individuals.

- Shared Ownership: Breaking the wall between Dev and Ops. Both teams share responsibility for the uptime and performance of the service.

Section 2: Service Level Objectives & Error Budgets

1. Defining the SLO (The Target)

- Definition: An SLO is a target level for the reliability of a service over a specific window of time (e.g., 30 days).

- Purpose: It serves as the internal compass for the team, defining what “good enough” looks like for the customer.

- User-Centricity: Effective SLOs must be defined from the user’s perspective. If the users are unhappy but your SLOs are “green,” you are measuring the wrong things.

2. SLI: The Measuring Stick

- Definition: A Service Level Indicator (SLI) is the quantitative measure of a specific aspect of a service’s performance.

- The Relationship: You cannot have an SLO without an SLI.

- Common SLI Types:

- Availability: Percentage of successful requests.

- Latency: Time taken to process a request (e.g., p95 < 200ms).

- Error Rate: The frequency of 5xx status codes.

3. Error Budgets (The Innovation Fund)

- Definition: Error Budget = 100% − SLO. It is the acceptable amount of failure or downtime allowed within a period.

- The Balance: It provides a data-driven way to balance velocity (moving fast) with stability (reliability).

- The Policy: An Error Budget Policy dictates what happens when the budget is exhausted. Usually, feature releases are halted to focus on reliability engineering.
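The arithmetic is worth doing once by hand. The sketch below converts an SLO into minutes of allowed downtime and applies a simple "halt releases when the budget is gone" policy. The 99.9% target, the 30-day window, and the function names are assumptions chosen for the example.

```python
def error_budget_fraction(slo_percent: float) -> float:
    """Error budget as a fraction: (100% - SLO) / 100."""
    return (100.0 - slo_percent) / 100.0


def allowed_downtime_minutes(slo_percent: float, window_days: int = 30) -> float:
    """Minutes of full downtime the budget permits within the window."""
    window_minutes = window_days * 24 * 60
    return error_budget_fraction(slo_percent) * window_minutes


def releases_allowed(slo_percent: float, downtime_so_far_minutes: float,
                     window_days: int = 30) -> bool:
    """Simplified policy: feature releases continue only while budget remains."""
    remaining = allowed_downtime_minutes(slo_percent, window_days) - downtime_so_far_minutes
    return remaining > 0


# A 99.9% SLO over 30 days leaves roughly 43.2 minutes of budget.
print(round(allowed_downtime_minutes(99.9), 1))  # 43.2
print(releases_allowed(99.9, 30))                # True: about 13 minutes left
print(releases_allowed(99.9, 50))                # False: budget exhausted
```

Real policies usually count failed requests rather than whole minutes of downtime, but the subtraction is the same.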

4. Advanced Management & VALET

- Error Budget Policy: A formal agreement between stakeholders that defines consequences for missing targets.

- Progressive Delivery: Using techniques like Canary or Blue/Green deployments to protect the Error Budget during releases.

- Business Protection Period: Specific “freeze” times (like Black Friday) where the Error Budget is guarded more strictly.

- VALET Dimensions: A framework for comprehensive monitoring:

- Volume (Amount of traffic)

- Availability (Is it up?)

- Latency (How fast?)

- Errors (How many failures?)

- Tickets (How much manual intervention?)

5. Business Consequences

Missing an SLO isn’t just a technical failure; it has business impacts:

- Loss of Revenue: Direct impact of downtime.

- Loss of Reputation: Customer trust decreases.

- SLA Violations: If reliability falls far enough below the SLO (the internal target) to breach the SLA (the external, legally binding contract), the result is financial penalties or service credits to customers.

Section 3: Reducing Toil

1. What is Toil?

- Definition: Toil is the kind of work tied to running a production service that is manual, repetitive, automatable, tactical, devoid of enduring value, and scales linearly as a service grows.

- The Litmus Test: If a human has to do it every time it happens, and doing it doesn’t make the system better for the next time, it is Toil.

2. Characteristics of Toil

To identify Toil during the exam, look for these six specific traits:

- Manual: Requires a human to type a command or click a button.

- Repetitive: You’ve done it before, and you’ll do it again.

- Automatable: A machine could do it just as well (if you had the time to write the script).

- Tactical: Interrupt-driven and reactive (e.g., “The disk is full again, clear the logs”).

- No Enduring Value: Once the task is done, the state of the world hasn’t permanently improved.

- Linear Scaling: If you double your service size, you have to double the time spent on this task.

3. Categories of Toil

Common areas where Toil hides:

- Interrupts: Responding to non-urgent alerts or “quick” Slack pings.

- On-Call: High-volume, low-priority pages that don’t require deep engineering.

- Releases and Pushes: Manual steps in a deployment pipeline (the “Human CI/CD”).

4. What is NOT Toil?

It is critical to distinguish Toil from other types of work:

- Engineering Work: Designing new features or building automation to eliminate Toil.

- Administrative Overhead: Filling out timesheets or attending team meetings. While sometimes boring, this is “overhead,” not Toil.

- Deep Troubleshooting: Investigating a brand-new, complex bug. This is creative problem-solving and adds “enduring value” through learning.

5. Why Toil is “Toxic”

Toil is dangerous because of its impact on both the Individual and the Organization:

- Career Stagnation: If an engineer only does Toil, they aren’t learning new skills.

- Burnout: Constant repetitive work leads to mental fatigue and lower morale.

- Slow Velocity: If 90% of your time is spent “keeping the lights on” (Toil), you only have 10% left to build new features.

- Error Prone: Humans are bad at repetitive tasks; eventually, a manual step will be missed.

6. Strategies for Fixing Toil

SREs use three main approaches to eliminate Toil:

- External (Process): Changing how teams interact (e.g., “No manual requests without a ticket”).

- Internal (Automation): Writing scripts, using Terraform, or building self-healing systems to replace manual intervention.

- Service Enhancements: Improving the code itself so the problem doesn’t happen (e.g., adding an auto-log-rotation feature so the disk never fills up).

Section 4: Monitoring and Service Level Indicators

1. The Service Level Indicator (SLI)

- Definition: A quantitative measure of some aspect of the level of service provided.

- The “What”: If the SLO is the goal, the SLI is the measurement.

- Examples:

- Availability: (Successful Requests / Total Requests) x 100.

- Latency: Time taken to process a request (e.g., 99th percentile < 300ms).

- Throughput: Number of requests handled per second.
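The three example SLIs above can be computed from raw request data in a few lines. This is a rough sketch: the sample numbers are made up, and the percentile uses the simple nearest-rank method, which is only one of several common definitions.

```python
import math
from typing import Sequence


def availability_percent(successful: int, total: int) -> float:
    """(Successful Requests / Total Requests) x 100."""
    if total <= 0:
        raise ValueError("total must be positive")
    return successful / total * 100


def percentile(values: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def throughput_rps(total_requests: int, window_seconds: float) -> float:
    """Requests handled per second over the measurement window."""
    return total_requests / window_seconds


# Hypothetical sample of request latencies in milliseconds.
latencies_ms = [120, 95, 180, 250, 110, 90, 310, 140, 105, 130]

print(availability_percent(9990, 10000))   # about 99.9
print(percentile(latencies_ms, 99))        # 310
print(percentile(latencies_ms, 99) < 300)  # False: the p99 < 300ms target is missed
print(throughput_rps(6000, 60))            # 100.0
```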

2. Monitoring: The “Watchman”

- Definition: The ongoing process of collecting, aggregating, and analyzing real-time data about a system’s performance and health.

- The Goal: To detect when a system deviates from “normal” behavior based on predefined metrics.

- The “What”: Monitoring answers the question: “Is the system healthy?”

3. The Anatomy of a Monitoring System

To understand how telemetry moves through a system, remember these components:

- Core Engine: The brain that processes and stores incoming data.

- Agents/Exporters: Small software components that collect data from servers or apps.

- Telemetry: The raw data (metrics, logs) being transmitted.

- Anomaly Detection: Logic that identifies patterns outside the baseline.

- Alerting & Paging: The mechanism that notifies a human when a threshold is crossed.

4. Observability: The “Detective”

- Definition: The ability to understand the internal state of a system solely by looking at its external outputs (logs, metrics, traces).

- The Difference:

- Monitoring is for “Known-Unknowns” (things you know can go wrong, like high CPU).

- Observability is for “Unknown-Unknowns” (complex, unpredictable failures you didn’t see coming).

- The Goal: Observability answers the question: “Why is this happening?”

5. The Three Pillars of Observability

In the SRE exam, these three data types are the foundation of a modern observability stack:

- Metrics: Numerical data points over time (e.g., CPU is at 70%). Great for dashboards and alerting.

- Logs: Immutable records of discrete events (e.g., “User X failed to login at 2:01 AM”). Great for root cause analysis.

- Traces: Information that follows a single request as it moves through various microservices. Great for finding bottlenecks in complex systems.

6. Why It Matters

- Mean Time to Detect (MTTD): Good monitoring reduces the time it takes to realize there is a problem.

- Mean Time to Repair (MTTR): Deep observability reduces the time it takes to find the root cause and fix it.

- Feedback Loop: Observability provides the data needed to refine SLOs and improve the system’s long-term reliability.

Section 5: Tools and Automation

1. The Purpose of Automation in SRE

- Core Goal: To execute tasks without human intervention.

- Strategic Benefits:

- Eliminating Toil: Freeing up SREs for high-value engineering.

- Improving Reliability: Reducing “fat-finger” errors caused by manual changes.

- Enhancing Efficiency: Directly improving SLIs (like Latency) and protecting SLOs through faster, more consistent responses.

2. The SRE Safety Mindset

- Evaluation of Risk: Automation is a powerful tool that can fail at scale. SREs must build in safeguards:

- Input Validation: Ensuring the automation doesn’t process “garbage” data.

- Timeouts: Preventing a stuck process from consuming all resources.

- Fail-to-Human: Automation must be designed to stop and alert a human if it encounters unsafe or ambiguous conditions. It should never “guess.”
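Here is one way the three safeguards could fit together in a small remediation wrapper. It is a sketch under assumptions: the `NeedsHuman` exception, the allow-list of service names, and the stand-in command are all hypothetical, and a real system would page someone rather than just raise.

```python
import subprocess
import sys
from typing import Sequence


class NeedsHuman(Exception):
    """Raised when the automation should stop and hand off to a person."""


ALLOWED_SERVICES = {"web", "worker"}  # hypothetical service names


def run_remediation(service: str, command: Sequence[str], timeout_s: float = 5.0) -> str:
    # Input validation: refuse anything outside the known set instead of guessing.
    if service not in ALLOWED_SERVICES:
        raise NeedsHuman(f"unknown service {service!r}")
    try:
        # Timeout: a stuck command is killed rather than left to hog resources.
        result = subprocess.run(list(command), capture_output=True, text=True,
                                timeout=timeout_s)
    except subprocess.TimeoutExpired as exc:
        raise NeedsHuman(f"remediation for {service} timed out") from exc
    # Fail-to-human: a non-zero exit is ambiguous, so escalate.
    if result.returncode != 0:
        raise NeedsHuman(f"remediation for {service} exited with {result.returncode}")
    return result.stdout.strip()


# Stand-in command so the example runs anywhere Python is installed.
fake_restart = [sys.executable, "-c", "print('restarted')"]
print(run_remediation("web", fake_restart))  # restarted

try:
    run_remediation("database", fake_restart)
except NeedsHuman as err:
    print(f"escalating: {err}")
```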

3. SRE-Led Service Automation

This practice shifts the focus toward automating the entire lifecycle of a service:

- Infrastructure: Using IaC (Infrastructure as Code) and CaC (Configuration as Code) to ensure environments are reproducible.

- Testing: Implementing automated functional (does it work?) and non-functional (is it fast/secure?) tests.

- Instrumentation: Ensuring the service is “externally viewable” (Observability) so automation can make informed decisions.

- Growth Envelope: Planning for how automation will handle future scale.

4. The Hierarchy of Automation Types

SRE maturity is often measured by where the team sits on this hierarchy:

- None: Tasks are purely manual. High risk and high toil.

- External: The engineer has a script “on their laptop” or in a home directory. It’s better than manual but requires a human to trigger it.

- Internal: The system has built-in automation (like a cron job or a CI/CD pipeline) that requires little to no human intervention.

- Self-Correcting: The “Holy Grail.” The system detects an issue (e.g., a service crash) and resolves it automatically (e.g., restarting the container or scaling up) without any human input.
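The top of the hierarchy can be pictured as a detect-and-restart loop with a hard limit. The sketch below uses a fake in-memory service so it runs on its own. The class, the function names, and the limit of three restarts are illustrative assumptions, not a real orchestrator API.

```python
from typing import Callable


def self_heal(is_healthy: Callable[[], bool], restart: Callable[[], None],
              max_restarts: int = 3) -> bool:
    """Restart until healthy, up to max_restarts. False means escalate to a human."""
    for _ in range(max_restarts):
        if is_healthy():
            return True
        restart()
    return is_healthy()


class FakeService:
    """Stand-in for a real service: recovers after a set number of restarts."""

    def __init__(self, restarts_needed: int) -> None:
        self.restarts_needed = restarts_needed
        self.restarts = 0

    def is_healthy(self) -> bool:
        return self.restarts_needed == 0

    def restart(self) -> None:
        self.restarts += 1
        self.restarts_needed = max(0, self.restarts_needed - 1)


flaky = FakeService(restarts_needed=2)
print(self_heal(flaky.is_healthy, flaky.restart), flaky.restarts)    # True 2

broken = FakeService(restarts_needed=5)
print(self_heal(broken.is_healthy, broken.restart), broken.restarts)  # False 3
```

The restart limit matters: a loop that restarts forever hides a real problem instead of surfacing it.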

5. Emerging Automation Trends

Modern SREs leverage advanced platforms to scale their impact:

- AIOps: Using machine learning to correlate alerts and find patterns in noise.

- Progressive Deployment: Automating “Canary” or “Blue/Green” rollouts to limit the blast radius of a bad release.

- Platform Engineering: Creating internal developer platforms (IDPs) so Devs can self-serve infrastructure safely.

- Generative AI: Using AI to assist in writing automation scripts or summarizing complex incident logs.
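For the progressive deployment item, an automated canary gate often comes down to comparing the canary’s error rate with the baseline. The following is a simplified sketch. The thresholds (`max_ratio`, `min_requests`, the 0.1% floor) and the three outcomes are assumptions I picked for the example, not values from any particular tool.

```python
def error_rate(errors: int, requests: int) -> float:
    """Fraction of requests that failed."""
    if requests <= 0:
        raise ValueError("requests must be positive")
    return errors / requests


def canary_decision(baseline_errors: int, baseline_requests: int,
                    canary_errors: int, canary_requests: int,
                    max_ratio: float = 2.0, min_requests: int = 100) -> str:
    """Return 'wait', 'promote', or 'rollback' for a canary release."""
    # Too little traffic to judge: keep the canary small and keep watching.
    if canary_requests < min_requests:
        return "wait"
    baseline = error_rate(baseline_errors, baseline_requests)
    canary = error_rate(canary_errors, canary_requests)
    # Absolute floor so a near-perfect baseline does not block every canary.
    threshold = max(baseline * max_ratio, 0.001)
    return "promote" if canary <= threshold else "rollback"


print(canary_decision(50, 100_000, 1, 2_000))   # promote
print(canary_decision(50, 100_000, 40, 2_000))  # rollback
print(canary_decision(50, 100_000, 0, 50))      # wait
```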

Section 6: Antifragility and Learning from Failure

1. The Concept of Antifragility

- Definition: Coined by Nassim Nicholas Taleb, Antifragility refers to systems that do not just survive stress but actually improve because of it.

- SRE Application: It’s about moving beyond “Robustness” (resisting change) to a state where every failure is converted into a permanent improvement in the system’s design.

2. Why We Learn from Failure

Failure isn’t a mistake to be hidden; it’s a source of high-quality data. Learning from it allows teams to:

- Identify hidden architectural weaknesses.

- Make better data-driven decisions on Risk vs. Benefit.

- Improve the overall resilience of the organization.

3. Improving Reliability Metrics through Controlled Failure

We don’t wait for disasters; we create “Controlled Failures” (Chaos Engineering, Fire Drills) to test our metrics:

- MTTD (Mean Time to Detect): Does our monitoring actually fire when a service drops?

- MTTR (Mean Time to Repair): Do we have the automation to fix things fast, or are we stuck in manual toil?

- MTRS (Mean Time to Restore Service): How long to get the entire service back for the user?

- RPO (Recovery Point Objective): In a crash, did we lose more data than the business allows?
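After a game day, the time-based metrics fall out of three timestamps per incident. This sketch assumes MTTD runs from fault start to detection, MTTR from detection to resolution, and MTRS from fault start to resolution. Definitions vary between organizations, and the incident data here is invented.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import Sequence


@dataclass
class Incident:
    started: datetime   # when the fault was injected or began
    detected: datetime  # when monitoring noticed
    resolved: datetime  # when service was back for users


def _mean_minutes(seconds: Sequence[float]) -> float:
    return mean(seconds) / 60


def mttd_minutes(incidents: Sequence[Incident]) -> float:
    return _mean_minutes([(i.detected - i.started).total_seconds() for i in incidents])


def mttr_minutes(incidents: Sequence[Incident]) -> float:
    return _mean_minutes([(i.resolved - i.detected).total_seconds() for i in incidents])


def mtrs_minutes(incidents: Sequence[Incident]) -> float:
    return _mean_minutes([(i.resolved - i.started).total_seconds() for i in incidents])


# Two hypothetical drills on the same day.
drills = [
    Incident(datetime(2024, 1, 8, 10, 0), datetime(2024, 1, 8, 10, 4), datetime(2024, 1, 8, 10, 34)),
    Incident(datetime(2024, 1, 8, 14, 0), datetime(2024, 1, 8, 14, 10), datetime(2024, 1, 8, 14, 30)),
]

print(mttd_minutes(drills))  # 7.0
print(mttr_minutes(drills))  # 25.0
print(mtrs_minutes(drills))  # 32.0
```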

4. Cultural Transformation: The Third Way

Shifting from “Fixing” to “Learning” requires The Third Way (from the DevOps Handbook):

- Improve Daily Work: Dedicate time specifically to fix technical debt and automate.

- Reward Risk: Celebrate teams that try new things, even if they fail.

- Safe Experimentation: Schedule time for hackathons or “Game Days” to break things safely.

5. The Westrum Model (Organizational Culture)

The exam often references this model to identify how information flows within a company:

- Pathological (Power-oriented): Fear and tyranny. Messengers of bad news are “shot.”

- Bureaucratic (Rule-oriented): Departmental silos and strict rules. Messengers are ignored.

- Generative (Performance-oriented): The SRE ideal. Risks are shared, and failure leads to inquiry and improvement.

6. Proactive Testing: Fire Drills vs. Chaos Engineering

Both are used to build confidence, but they differ in execution:

- Fire Drills: Planned exercises (often in staging) to simulate BCP (Business Continuity) and DR (Disaster Recovery). It’s about training the humans.

- Chaos Engineering: Deliberately introducing failures into production to see how the system handles real-world unpredictability.

- Note: Chaos engineering is about testing hypotheses, not just “breaking things.”

Section 7: Organizational Impact of SRE

1. Why Organizations Embrace SRE

In the age of social media, downtime is a reputational risk. Organizations move to SRE to gain:

- Reliability & Risk Management: Shifting from “guessing” to using data-driven SLOs to prevent viral outages.

- Scalability: Managing growth economically by ensuring human effort doesn’t have to double just because the user base doubles.

- Complexity Management: SRE provides the framework to handle modern, distributed microservices that are too complex for traditional “Ops.”

2. Patterns of SRE Adoption

There is no “one size fits all” for SRE. Organizations typically follow one of these models:

- Consulting: Experts give advice; the Dev team still owns everything. (Low shared responsibility).

- Embedded: SREs sit inside the Dev team. They co-develop and eventually share the on-call load. (High shared responsibility).

- Platform: SREs build the “paved road” (cloud/tooling). They own the environment, but have limited involvement in the specific application code.

- Slice and Dice: SREs own specific layers (e.g., just the database or just the network).

- Full SRE: The ultimate goal. Reliability is everyone’s job, and everyone shares the pager.

3. Blameless Postmortems

A postmortem is a document that records an incident, its impact, the actions taken to mitigate it, its root cause, and the follow-up actions.

- Goal: To learn, not to punish. If you punish people for mistakes, they will hide them.

- Key Pillars:

- Incident Criteria: Clear rules on when a postmortem is required (e.g., “Any outage affecting >1% of users”).

- Root Cause Analysis: Focusing on process and system failures, not human error.

- Wide Sharing: Posting the results internally so the whole company learns from one team’s mistake.

- Actionable Follow-ups: Every postmortem must result in a ticket to fix the system.

4. SRE and Scale

SRE allows an organization to grow in three dimensions without breaking:

- Platform Growth: Automation handles clustering and monitoring as the infrastructure expands.

- Scope Growth: SRE tools and practices are standardized across different departments.

- Ticket Growth: By automating repetitive tasks and enabling Self-Service, SREs keep the ticket volume manageable even as the company grows.

5. Sustainable Incident Response

To prevent burnout, SRE incident response must be sustainable.

- The Golden Rules (Google):

- Maximum 50% Toil.

- Maximum 25% On-call time.

Section 8: SRE, Other Frameworks, The Future

1. SRE + Agile: Reliability as “Done”

- The Connection: SRE brings “Production Readiness” into the Agile Definition of Done.

- The Practice: SRE backlogs (toil reduction, automation) are treated like feature backlogs. Daily stand-ups and retrospectives are used to prioritize reliability just as much as new features.

2. SRE + ITSM/ITIL: Automation of Process

- The Connection: While ITSM (ITIL) provides the “rules” and predefined processes, SRE provides the automation to execute them.

- The Practice: SRE turns manual Change Management and Incident Management into automated, scalable workflows with digital audit trails, removing human error from compliance.

3. SRE + Value Stream Management (VSM): Business Alignment

- The Connection: VSM looks at the flow from “Idea to Customer.” SRE ensures that the “Customer” part of that flow is actually reliable.

- The Practice: SREs use VSM data to align their technical efforts (like latency reduction) with actual business outcomes and customer satisfaction.

4. SRE + Platform Engineering: The “Paved Road”

- The Connection: Platform Engineering builds the foundation (IDPs, automated infra); SRE builds the reliability on top of it.

- The Synergy:

- Reduced Toil: Platform teams build self-service tools so SREs don’t have to manually provision resources.

- Standardization: A unified platform makes it easier for SREs to implement “best practice” monitoring and security across the whole company.

5. The Evolution: Specialization & Trends

SRE is no longer just a generalist role; it is branching into specialized domains:

- Specialized Roles:

- NRE (Network Reliability Engineer): Applying SRE to complex software-defined networks.

- DBRE (Database Reliability Engineer): Focusing on data integrity, scaling, and performance at the data layer.

- Key Trends:

- Failure as Normal: Using Chaos Engineering to intentionally break things to learn how they fail.

- Automation as a Service (AaaS): Leveraging cloud-native tools (like managed CI/CD) so SREs don’t have to manage the “tooling for the tools.”

- Cloud Dominance: Relying on self-healing cloud primitives (Auto-scaling, Managed K8s) to act as the first line of defense.

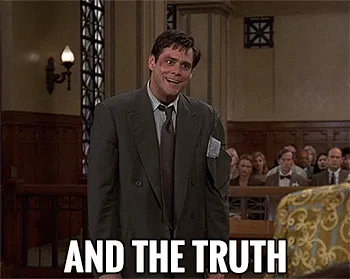

⚡ The Moment of Truth

The exam arrived on Friday. Unlike the Terraform exam, where I felt the “Overthinker” taking over, this felt controlled.

The questions fell into two categories:

- Level 1 (Direct): Fact-based. If you knew which slide to look at, you had the answer in 20 seconds.

- Level 2 (Applied): These tested your understanding of the “SRE Mindset.” You can’t “find” these answers; you have to know them.

📈 The Result: 98%

With the weekend in between, I had to wait for the result. By Tuesday, the email arrived.

Score: 98%.

That’s the relief. Not just because I passed, but because I proved that focused, disciplined hours beat “long hours” every time. You don’t need a whole day to learn SRE; you just need a quiet house and a plan.

🎬 Closing Thoughts

Terraform was about the State of the Code. SRE is about the State of the Service.

To my fellow parents in tech: the 2-hour window is enough. It’s not about how much time you have; it’s about how much of yourself you bring to those two hours.

The terminal is closed. The kids are asleep. And the badge is real.

If you’d like to continue the conversation or connect professionally, you can find me on LinkedIn.